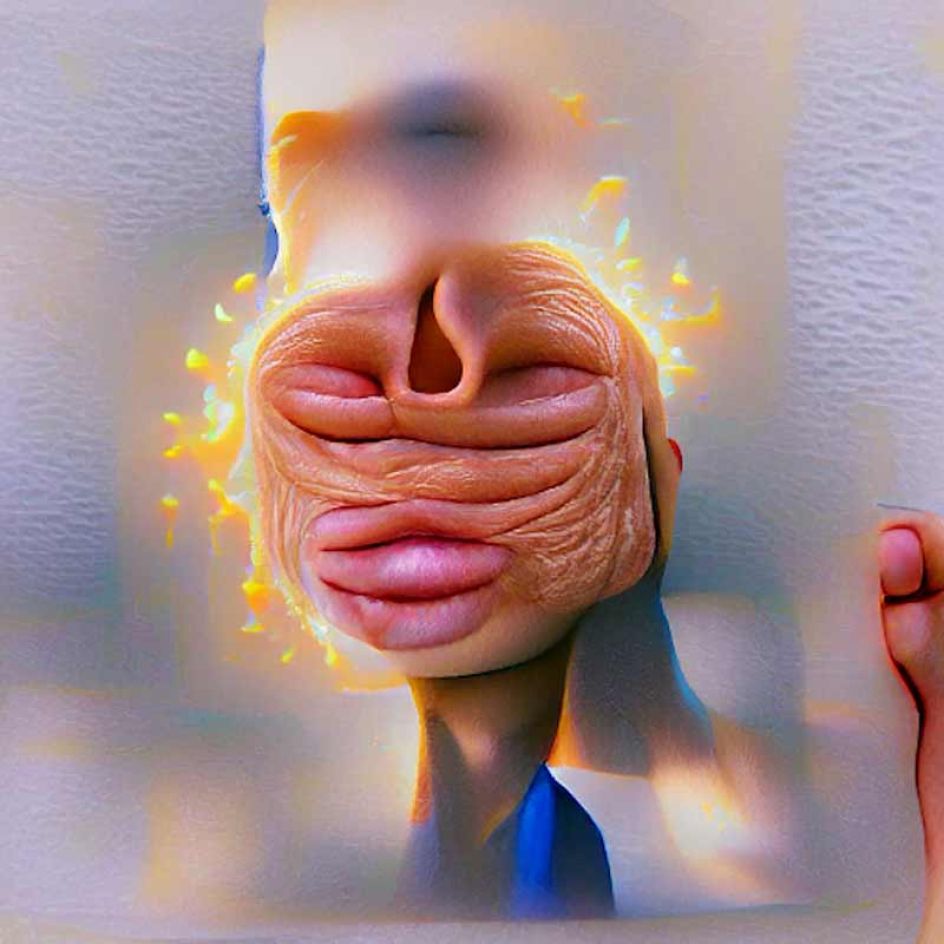

Ever wondered if Artificial Intelligence has a face? Here are some AI self-portraits

What would happen if we asked Artificial Intelligence to show us its face? That's the question creative director and artist Michael Hess posed in an art project using the latest AI-powered text-to-image technology. The resulting self-portraits might surprise you.

It's been quite the year for digital art as we continue to see extraordinary AI-generated images, from systems such as DALL-E, popping up everywhere. Type anything into the system, and it will produce a photograph, illustration or painting in response to your typed brief. Want to see a cyberpunk illustration of a teddy bear playing video games in Times Square? No problem. Or chuckle at the Cookie Monster looking disturbed as it watches its cookie stock melt? Not an issue. DALL-E, and other such programmes, will deliver the goods in under 30 seconds, all based on what you type.

But although this technology is incredible, if not a little disturbing, there has been some speculation around whether AI has become self-aware. And that only adds to the mystery around what firms like OpenAI have created. With this in mind, New York-based creative director and artist Michael Hess wanted to explore whether this was true by launching an art project with a difference. Using generative neural networks – in this case, VQGAN and CLIP – he asked AI to reveal itself with this simple text input: 'Show me your face'.

"I wanted to find out how self-aware an entity is that plays major roles in our lives, and what that looks like," he explains. "We think AI just follows a simple algorithm without reflecting on its own existence but is that really the case?"

The outcome was a collection of 100 AI self-portraits that make us wonder whether Artificial Intelligence is conscious, self-aware, and capable of responding to such a text input. What do you think? You can view all the artworks at michael-hess.com.
